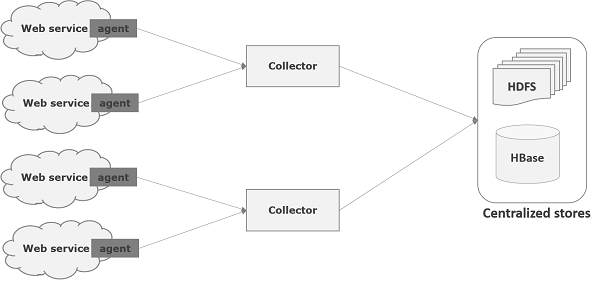

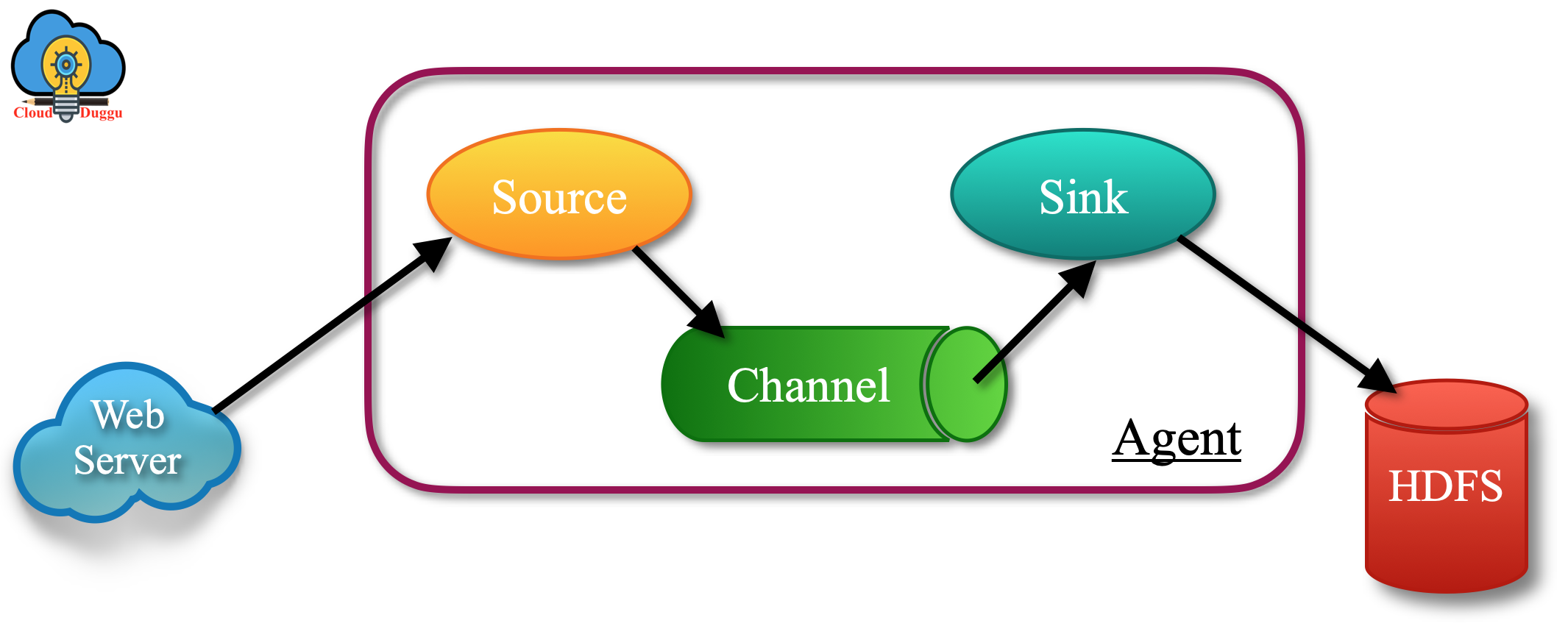

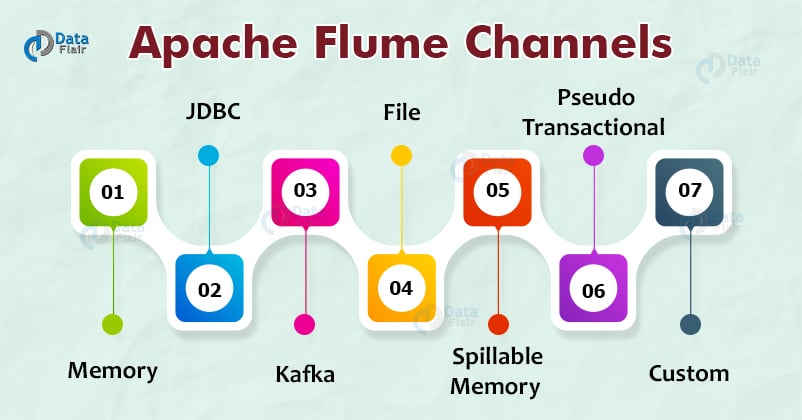

Apache Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of log data. It is a fault-tolerant data-ingestion tool with a simple and flexible architecture based on streaming data flows, and it has been designed specifically to handle high volumes and throughputs. To this end, Flume typically writes the data it collects to HDFS.

The Flume 1.11.0 User Guide is a comprehensive document that explains how to use Flume as a service for collecting and moving streaming data. The guide covers topics such as installation, configuration, sources, sinks, channels, and more. It also provides examples and best practices for Flume users.

Users who are interested in Apache Flume may also be interested in Confluent and Apache Kafka, since Kafka can be deployed to solve many of the same ingestion problems while also offering other capabilities such as real-time stream processing, broad connectivity, distributed publish/subscribe, and event-driven microservices architectures. This speaks to the more general utility of Kafka as a framework. Moreover, Kafka’s capabilities are often considered a superset of Flume’s.

Organizations that use both Flume and Kafka together have a variety of choices, such as a Kafka sink in Flume (dubbed “Flafka”), where Flume is configured as a Kafka producer. When Flume and Kafka are integrated, data usually moves from Flume to Kafka. It’s worth noting that although the Kafka sink is called a “sink” from the perspective of Flume, Flume usually serves as a source from the point of view of Kafka. Likewise, there is a source connector for Flume in the Kafka Connect ecosystem.
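To make the “Flafka” arrangement more concrete, the following is a minimal sketch of a Flume agent configuration in which a Kafka sink turns Flume into a Kafka producer. It is an illustration under stated assumptions rather than a recommended setup: the agent name `a1`, the component names `r1`, `c1`, and `k1`, the topic `flume-events`, the broker address `localhost:9092`, and the netcat port are all placeholders, and the property names follow the Kafka sink section of the Flume User Guide as commonly documented, so they should be checked against the Flume version in use.

```properties
# Hypothetical agent "a1" with one source, one channel, and one sink.
a1.sources = r1
a1.channels = c1
a1.sinks = k1

# Source: reads newline-separated text from a local TCP port (placeholder values).
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port = 44444
a1.sources.r1.channels = c1

# Channel: buffers events in memory between the source and the sink.
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# Sink: publishes each Flume event to a Kafka topic, so Flume acts as a Kafka producer.
a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
a1.sinks.k1.channel = c1
# Assumed broker address and topic name; replace with real values.
a1.sinks.k1.kafka.bootstrap.servers = localhost:9092
a1.sinks.k1.kafka.topic = flume-events
a1.sinks.k1.kafka.flumeBatchSize = 20
a1.sinks.k1.kafka.producer.acks = 1
```

Assuming the file is saved as `flafka.conf` (a hypothetical name), an agent of this shape would typically be started with something like `bin/flume-ng agent --conf conf --conf-file flafka.conf --name a1`. A memory channel keeps the sketch short, but it loses buffered events if the agent stops, so a durable channel may be a better fit where reliability matters.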

Flume is a highly reliable, distributed, and configurable tool. The Apache Flume project needs and appreciates all contributions, including documentation help, source code improvements, problem reports, and even general feedback. If you are interested in contributing, please visit our Wiki page on how to contribute.

Apache Flume is a tool, service, and data-ingestion mechanism for collecting, aggregating, and transporting large amounts of streaming data, such as log files and events, from various sources to a centralized data store. While Flume is primarily used for log ingestion, specifically into Hadoop for batch processing, Kafka can also be used for this purpose.